GitHub - KAUST-Academy/tensorflow-gpu-data-science-project: Template repository for a Python 3-based (data) science project with GPU acceleration using the TensorFlow ecosystem.

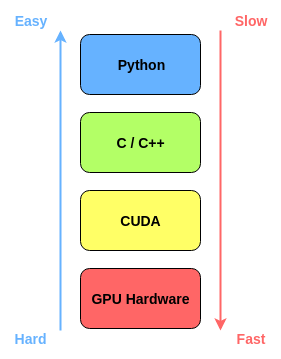

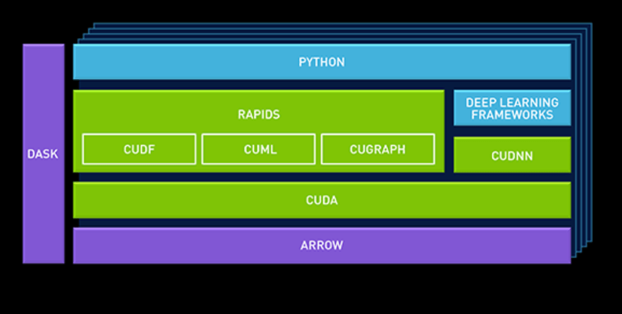

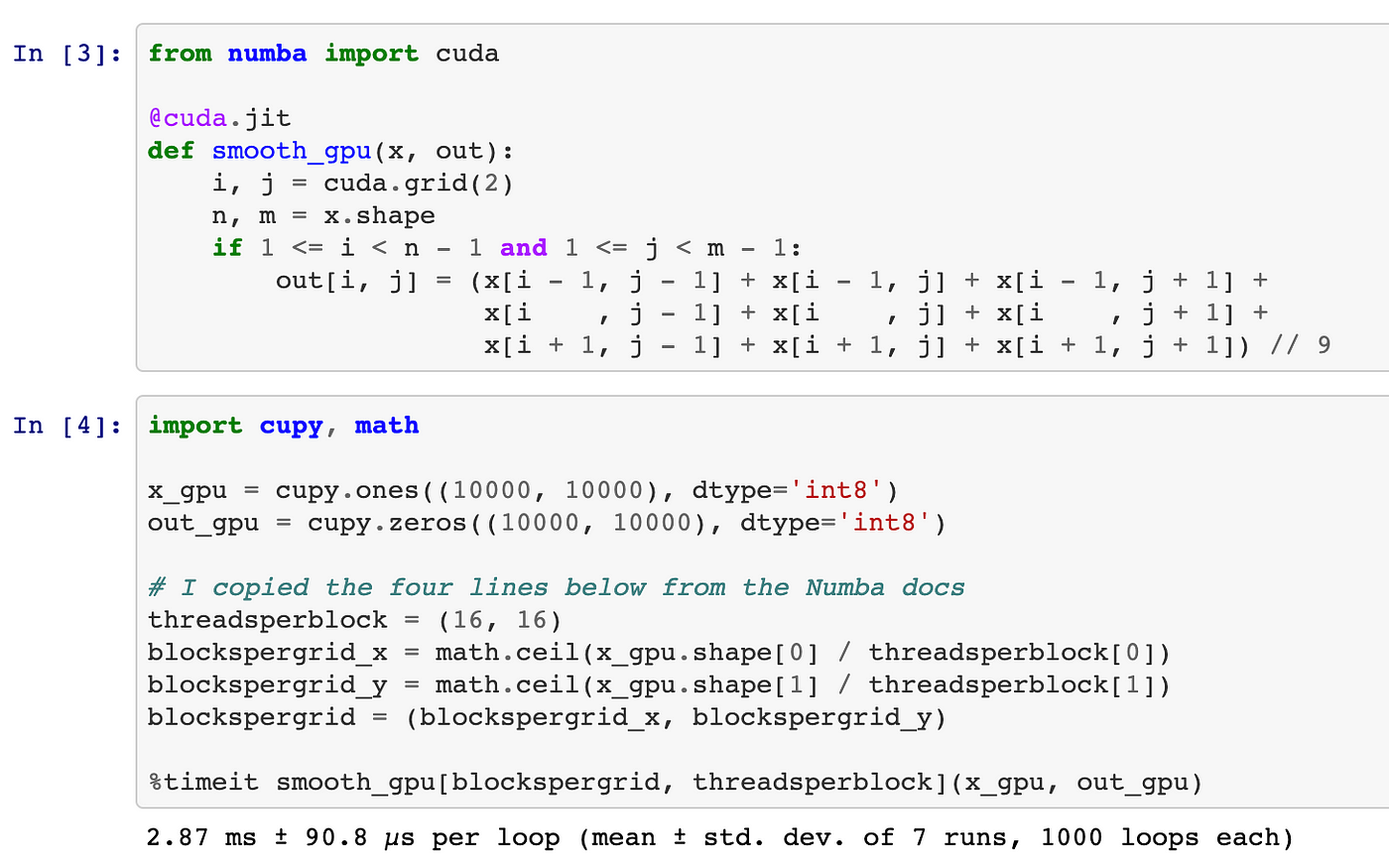

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

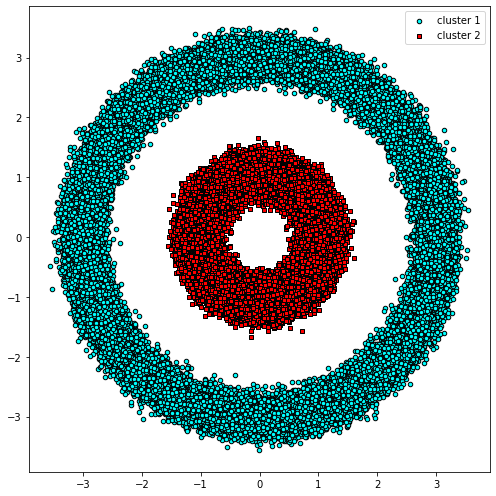

3.1. Comparison of CPU/GPU time required to achieve SS by Python and... | Download Scientific Diagram

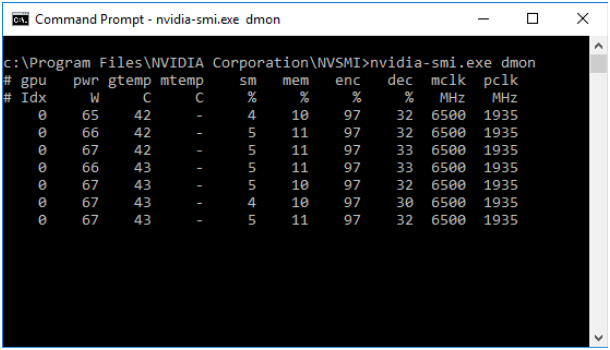

How to build and install TensorFlow GPU/CPU for Windows from source code using bazel and Python 3.6 | by Aleksandr Sokolovskii | Medium

GitHub - meghshukla/CUDA-Python-GPU-Acceleration-MaximumLikelihood-RelaxationLabelling: GUI implementation with CUDA kernels and Numba to facilitate parallel execution of Maximum Likelihood and Relaxation Labelling algorithms in Python 3