Brian2GeNN: accelerating spiking neural network simulations with graphics hardware | Scientific Reports

How to Use GPU in notebook for training neural Network? | Data Science and Machine Learning | Kaggle

GitHub - zylo117/pytorch-gpu-macosx: Tensors and Dynamic neural networks in Python with strong GPU acceleration. Adapted to MAC OSX with Nvidia CUDA GPU supports.

A Complete Introduction to GPU Programming With Practical Examples in CUDA and Python - Cherry Servers

How to Set Up Nvidia GPU-Enabled Deep Learning Development Environment with Python, Keras and TensorFlow

Training Deep Neural Networks on a GPU | Deep Learning with PyTorch: Zero to GANs | Part 3 of 6 - YouTube

GitHub - pytorch/pytorch: Tensors and Dynamic neural networks in Python with strong GPU acceleration

Why is the Python code not implementing on GPU? Tensorflow-gpu, CUDA, CUDANN installed - Stack Overflow

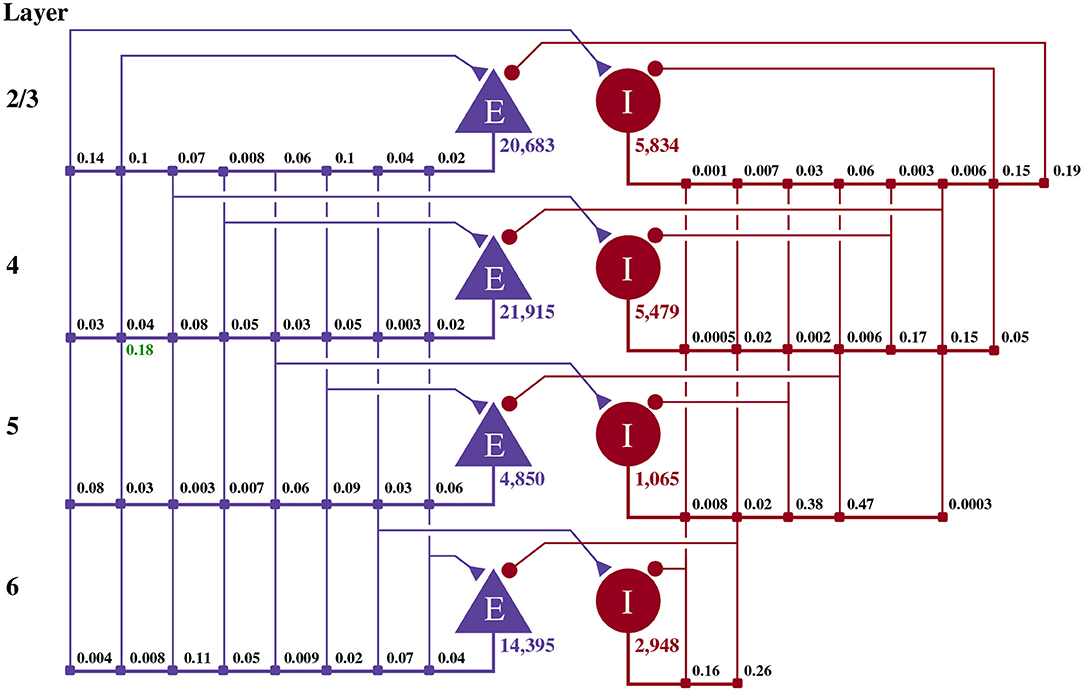

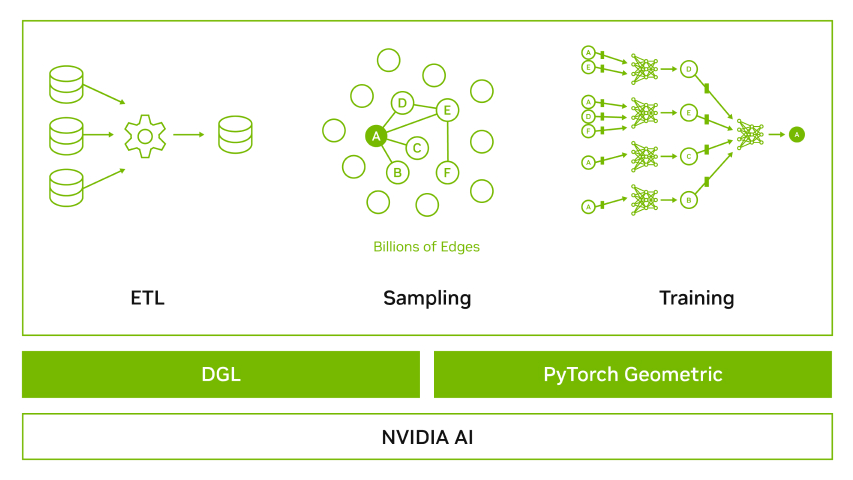

Optimizing Fraud Detection in Financial Services with Graph Neural Networks and NVIDIA GPUs | NVIDIA Technical Blog